Ishita Kakkar

Computer Science PhD Student

University of Wisconsin-Madison

ikakkar at wisc dot edu

About Me

I am a first-year Computer Science PhD student at the University of Wisconsin-Madison, advised by Prof. Junjie Hu. I study safety alignment in large reasoning models, using mechanistic interpretability to explain and mitigate jailbroken behaviors. I am fortunate to be supported by the NSF Graduate Research Fellowship (GRFP). I previously earned my Bachelor's in Computer Science from the University of Massachusetts Amherst (UMass Amherst). I am passionate about creating transparent, interpretable, and trustworthy natural language processing (NLP) and machine learning (ML) systems for societal good. Specifically, my research interests lie at the intersection of NLP and Public Interest Technology (PIT). For the former, I have conducted research under the supervision of Mikhail Yurochkin at the MIT-IBM Watson AI Lab, Prof. Mai ElSherief at Northeastern University (NEU), and Prof. Hong Yu at the UMass Amherst BioNLP Lab. For the latter, I was a 2024 Public Interest Technology New England (PIT-NE) Impact Technology Fellow and a Student Leader at the Public Interest Technology - University Network (PIT-UN). For my undergraduate thesis, I worked on causal inference under Prof. David Jensen to advance my understanding of explainable AI and interpretability. I am also honored to be a Break Through Tech AI/ML Fellow at MIT, an IDEAS (Intelligence, Data, Ethics And Society) Fellow at NEU, and an Amazon Web Services AI/ML Fellow.

Research Interests

Transparent and Trustworthy AI

To create a trustworthy AI model, the algorithm can’t be a black box: its creators, users, and stakeholders must understand how it works in order to trust its results. With LLMs deeply integrated into daily life and shaping ideas, I’m interested in researching methods such as causal inference, mechanistic interpretability, Explainable AI (XAI), and retrieval-augmented generation (RAG) to explain the predictions of these black-box models. My interest in this area grew after one of my research projects was shelved because we could not explain its key results, which highlighted the critical need for a better understanding of LLMs. I aim to advance research in these fields to address such challenges and increase our control over how these models are developed.

Responsible AI and Safe Human-LLM Interactions

This interest began after I interacted with AI chatbots on Instagram, including an “AI mom” whose preset question was, “My mom doesn’t understand me.” Conversations with various AI personas (e.g., AI dating coach, AI sister, AI best friend) made me reflect on the influence these chatbots could have on vulnerable and impressionable users on social media. With the rise of such chatbots, I am keen to research the emotional intelligence of LLMs to ensure safe and responsible human-LLM interactions. Building on my current work on LLM emotional intelligence (in submission), I aim to help regulate conversations in these nuanced settings. My project with the MIT-IBM Watson AI Lab has further deepened my understanding of how model performance varies across semantic topics, through topic modeling and performance analysis on datasets such as Chatbot Arena.

News

- [Nov 2024] Attended the Public Interest Technology Summit 2024 in San Jose as a fully funded scholar
- [Sept 2024] Paper accepted at the Third Workshop on NLP for Positive Impact at EMNLP 2024
- [Sept 2024] Joined the MIT-IBM Watson AI Lab as an AI Studio Research Fellow
- [Sept 2024] Selected for the Undergraduate Student Council at the College of Information and Computer Science
- [June 2024] Selected for the IDEAS Fellowship at Northeastern
- [May 2024] Selected as a Student Leader at the Public Interest Technology - University Network
- [May 2024] Selected for the Break Through Tech AI/ML Fellowship at the MIT Schwarzman College of Computing
- [May 2024] Selected as a Public Interest Technology New England Impact Technology Fellow
- [Apr 2024] Poster presentation at the 30th Massachusetts Undergraduate Research Conference (Mass URC 2024)
- [Mar 2024] Led a workshop at the Voices of Data Science Conference 2024 at UMass Amherst
- [Feb 2024] Selected as Secretary of the UMass Public Interest Technology Club
- [Feb 2024] Joined the UMass BioNLP Lab for an independent study under Prof. Hong Yu
- [Feb 2024] Appointed Head Undergraduate Course Assistant (UCA) at UMass Amherst
- [Dec 2023] Awarded the Amazon Web Services (AWS) AI/ML Fellowship
- [Sept 2023] Lightning talk at the National Early Research Scholars Program (ERSP) Conference 2023
- [Sept 2023] Joined Prof. Mai ElSherief’s lab at Northeastern University
- [Aug 2023] Research talk at the UC San Diego Summer Research Conference 2023
- [June 2023] Selected as a Summer Research Intern in Prof. Mai ElSherief’s lab through the 2023 UCSD Early Research Scholars Summer Program
- [Sept 2022] Joined the UMass Laboratory for Advanced Software Engineering Research (LASER) as a research apprentice under Prof. Yuriy Brun
- [Sept 2022] Selected for the 2022 Early Research Scholars Program (ERSP) at UMass Amherst
- [Jul 2022] Began teaching AP Computer Science for the Microsoft Technology Education and Learning Support (TEALS) Program
- [Sept 2021] Chancellor’s Award recipient at UMass Amherst

Projects

Inferring Mental Burnout Discourse Across Reddit Online Communities

Nazanin Sabri¹, Anh Pham², Ishita Kakkar², Mai ElSherief³

¹University of California San Diego, ²University of Massachusetts Amherst, ³Northeastern University

What are people saying about mental burnout on social media? Accepted at the Third Workshop on NLP for Positive Impact at EMNLP 2024. View Publication

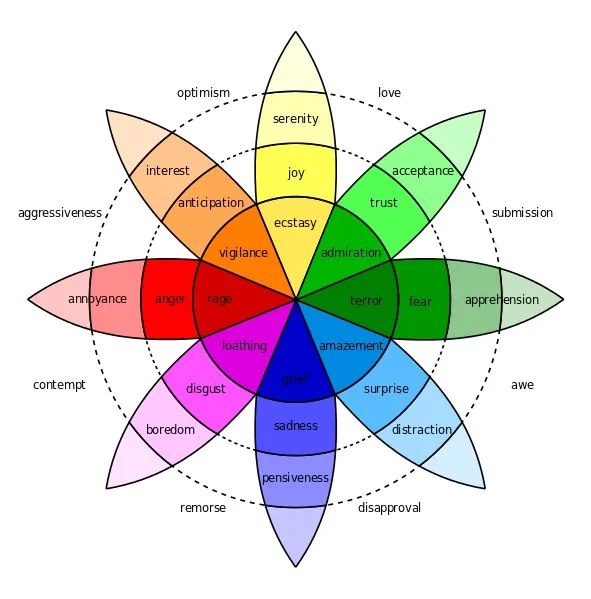

Emotional Bias in Large Language Models

Isha Joshi¹, Anh Pham², Ishita Kakkar², Melissa Karnaze³, Sindhu Venkata Kothe³, Mai ElSherief¹

¹Northeastern University, ²University of Massachusetts Amherst, ³University of California San Diego

Are LLMs emotionally intelligent in their responses? We present EXPRESS, a novel emotion-prediction benchmark that serves as a resource for evaluating emotional intelligence in LLMs. This work is in submission to the Association for Computational Linguistics (ACL) Rolling Review, August 2024 cycle.

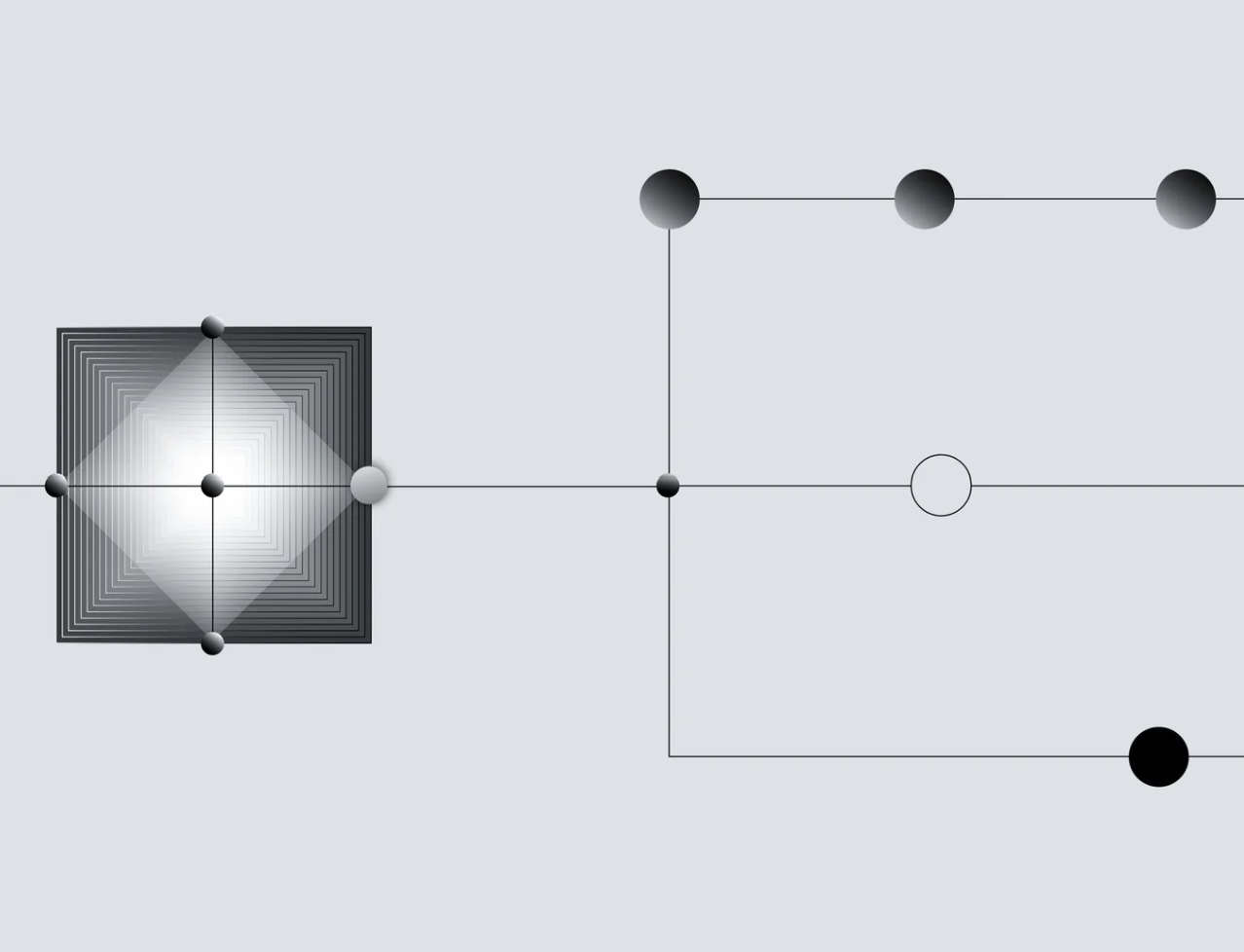

Identifying LLM Strengths and Weaknesses in Conversation Topics

Ishita Kakkar¹, Seungmin (Min) Cho², Tanvi Kandepuneni¹, Arushi Agrawal¹, My Vo³, Felipe Maia Polo⁴, Mikhail Yurochkin⁵

¹University of Massachusetts Amherst, ²Boston University, ³Simmons University, ⁴University of Michigan Ann Arbor, ⁵IBM Research

Identifying the strengths and weaknesses of LLMs of different sizes across conversation topics.

Benchmarking Speech Recognition Models Against Stutter Speech

Ishita Kakkar¹, Dongim Lee², Nikhila Peravali¹, Qisheng Li³, Shaomei Wu³

¹University of Massachusetts Amherst, ²Olin College of Engineering, ³AImpower.org

Are speech recognition models biased against impaired speech?

Personal

I love graphic design and calligraphy! Check out my work here. I also co-managed Campus Design & Copy, a fully student-run on-campus printing and design business, for a year!